Assembly Versioning and DLL Hell in C# .NET Framework: Problems and Solutions

In this article, we'll talk about what exactly DLL Hell is, how these kinds of problems can occur, and the best ways of dealing with them.

Understanding How Assemblies Load in C# .NET

We are constantly dealing with libraries and NuGet packages. These libraries depend on other popular libraries and there are a lot of shared dependencies. With a large enough web of dependencies, you'll eventually get into conflicts or hard situations. The best way to deal with such issues is to understand how the mechanism works internally.

Is C# Slower Than C++?

Is C# slower than C++? That's a pretty big question. As a junior developer, I was sure that the answer was "Yes, definitely". Now that I'm more experienced, I know that the answer is not obvious and is actually quite complicated.

C# to C# Communication: REST, gRPC and everything in between

There are many ways to communicate between a C# client and a C# server. Some are robust, others not so much. Some are very fast, others aren't. It's important to know the different options so you can decide what's best for you.

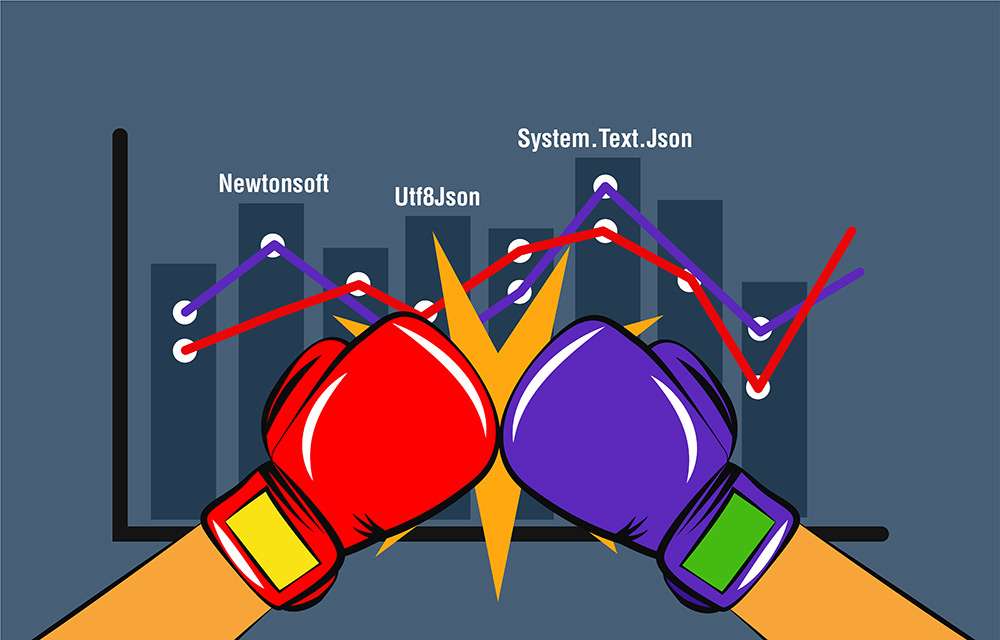

The Battle of C# to JSON Serializers in .NET Core 3

.NET Core 3 was recently released and brought with it a bunch of innovations, including a brand new JSON (de)serializer, System.Text.Json. We're going to compare this serializer with Newtonsoft.Json and other major .NET serializers. Check out this epic performance battle.

Pipeline Implementations in C#: TPL Dataflow Async steps and Disruptor-net

In the third part of the series, we'll see how to create asynchronous steps in the pipeline with TPL Dataflow. We'll also see a new implementation using the Disruptor-net library.

Pipeline Pattern in C# (part 2) with TPL Dataflow

In the first part of the series, we talked about the Pipeline Pattern in programming, also known as the Pipes and Filters design pattern. In this part, we'll see how to implement such a pipeline with TPL Dataflow.

Pipeline Pattern Implementations in C# .NET - Part 1

The Pipeline pattern is a powerful tool in programming. The idea is to chain a group of functions so that the output of each function is the input of the next one. The concept is pretty similar to an assembly line where each step manipulates and prepares the product for the next step.

Extension Methods Guidelines in C# .NET

Extension methods are awesome, right? They are probably most widely used in the LINQ feature. But when should we use them? And when shouldn't we? Let's talk guidelines.